Mock-up Azure VMware Solution in Hub-and-Spoke topology – Part 3

Overview

In the previous blog posts (part 1 and part 2), we covered the deployment of the basic components of a Hub and Spoke topology, including an Azure VMware Solution (AVS) deployment.

In the first post, we deployed and configured a mock-up of a Hub and Spoke environment. In the second post, we connected the AVS environment to an AVS transit vNet and advertised a default route to the AVS workload. This default route did not yet use our hub-nva appliance; instead, it relied on an appliance deployed in the avs-transit-vnet to reach the Internet.

In this step, we will integrate our avs-transit-vnet within the overall hub-and-spoke topology and rely on the hub-nva VM to manage all the required filtering for:

- Spoke-to-spoke

- Spoke-to-On-Premise (and vice versa)

- Internet breakout

As we want AVS to behave like a spoke, we will apply this rule to the avs-transit-vnet too.

As a reminder, the components and network design described in this blog post are only for demonstration purposes. They are not intended to be used in a production environment and do not represent Azure best practices. They are provided as-is for mock-up and learning purposes only.

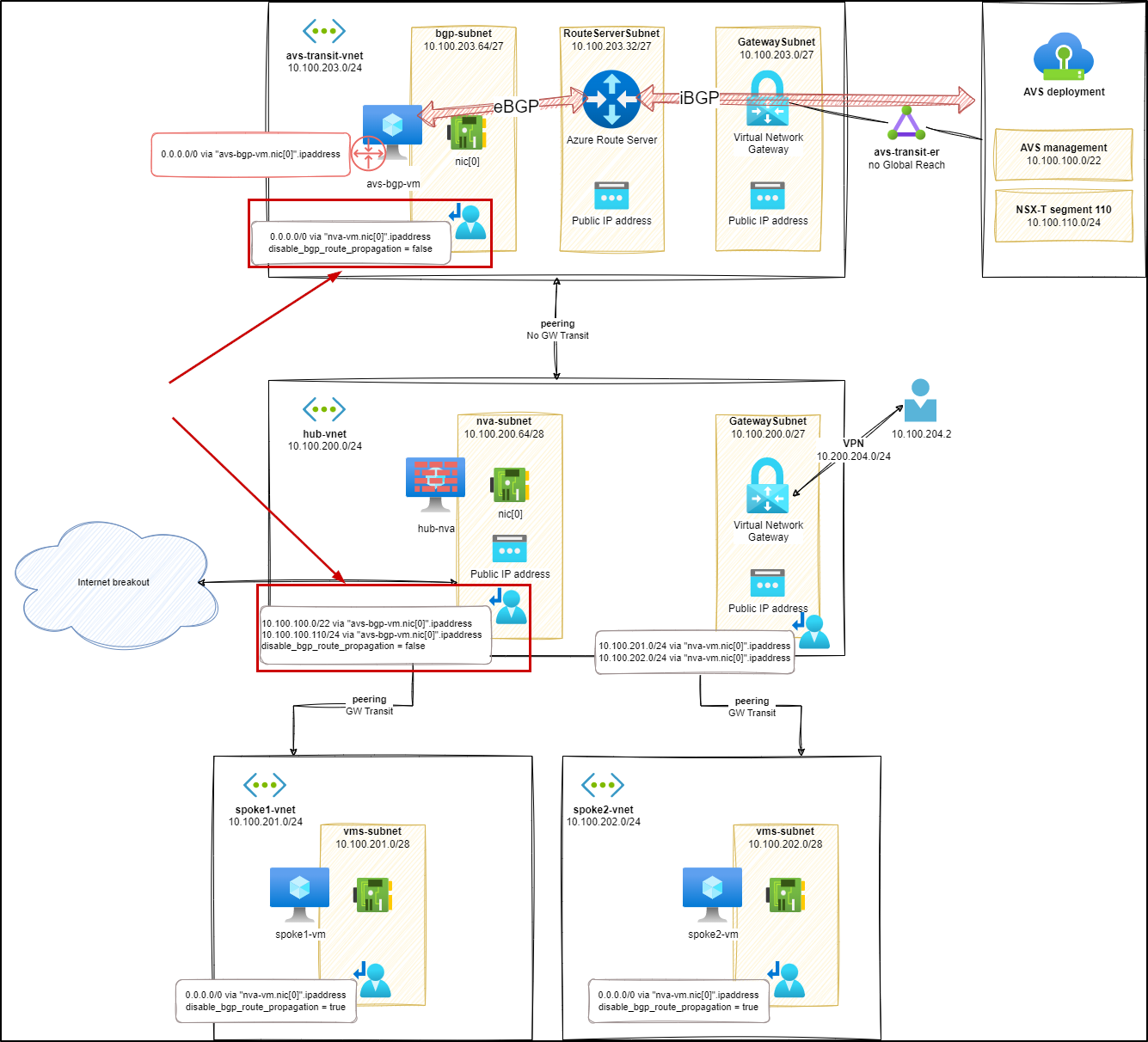

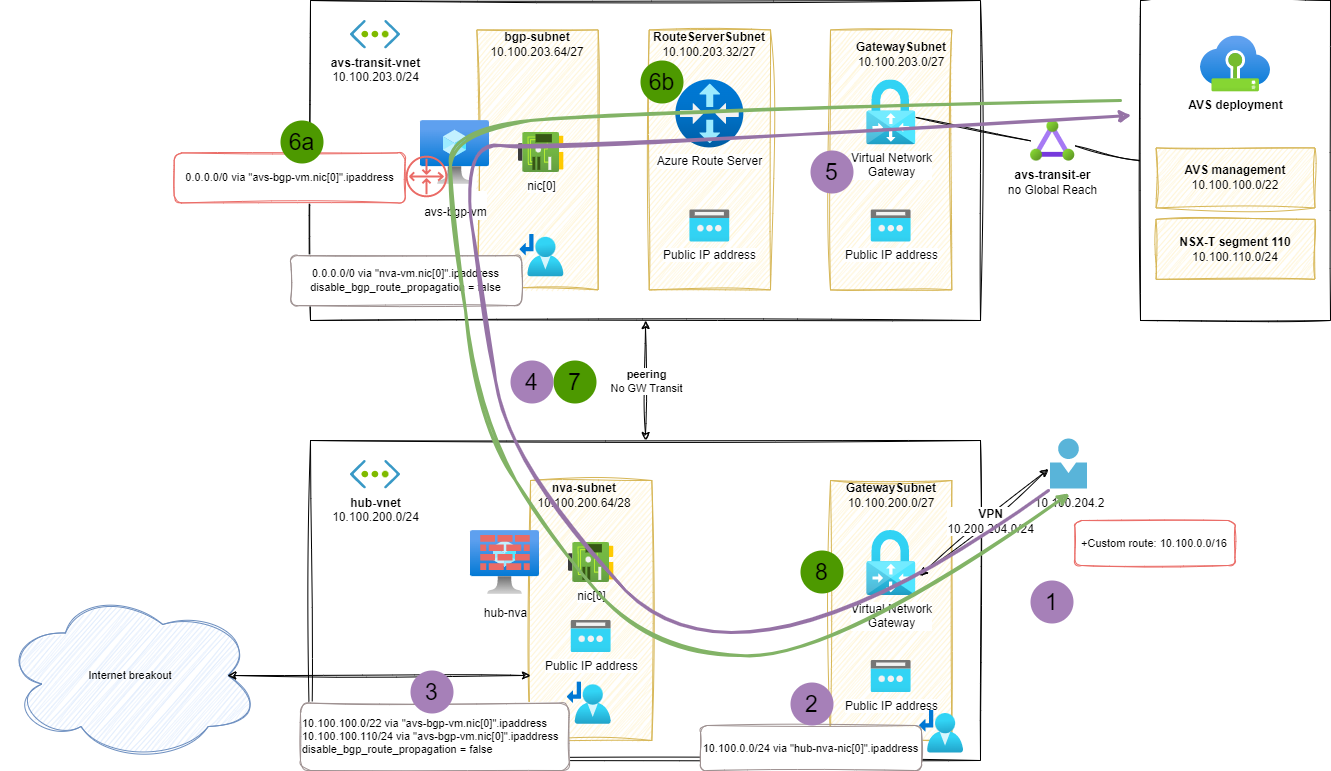

Stage 7 – Advertise the hub default routes to AVS

First, we need to add some routes in a new UDR applied to the nva-subnet. These routes ensure that hub-nva can forward AVS-related traffic through the avs-bgp-vm. In our lab setup, we do it for two prefixes:

- AVS management: 10.100.100.0/22

- AVS workload: 10.100.110.0/24

We also need to update the UDR applied to the bgp-subnet within avs-transit-vnet to add the default route via the hub-nva appliance.

Routes analysis (s7)

We can check the new routes, applicable to the hub-nva NIC:

```bash
az network nic show-effective-route-table \
  --ids /subscriptions/<sub-id>/resourceGroups/nva-testing-RG/providers/Microsoft.Network/networkInterfaces/hub-nva-nic \
  -o table
Source    State    Address Prefix    Next Hop Type     Next Hop IP
--------  -------  ----------------  ----------------  -------------
Default   Active   10.100.200.0/24   VnetLocal
Default   Active   10.100.202.0/24   VNetPeering
Default   Active   10.100.201.0/24   VNetPeering
Default   Active   10.100.203.0/24   VNetPeering
Default   Active   0.0.0.0/0         Internet
...
User      Active   10.100.110.0/24   VirtualAppliance  10.100.203.68  # <--- AVS workload
User      Active   10.100.100.0/22   VirtualAppliance  10.100.203.68  # <--- AVS management
```
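To read this table, it helps to remember that the most specific matching prefix decides the next hop. The following Python snippet is only a minimal sketch of that longest-prefix-match lookup, using the rows shown above (the elided rows are left out). The `lookup` helper and the tuple layout are illustrative assumptions, not part of any Azure tooling.

```python
import ipaddress

# Effective routes of hub-nva-nic, copied from the table above as
# (prefix, next hop type, next hop IP or None). Elided rows are omitted.
EFFECTIVE_ROUTES = [
    ("10.100.200.0/24", "VnetLocal", None),
    ("10.100.202.0/24", "VNetPeering", None),
    ("10.100.201.0/24", "VNetPeering", None),
    ("10.100.203.0/24", "VNetPeering", None),
    ("0.0.0.0/0", "Internet", None),
    ("10.100.110.0/24", "VirtualAppliance", "10.100.203.68"),
    ("10.100.100.0/22", "VirtualAppliance", "10.100.203.68"),
]


def lookup(destination, routes=EFFECTIVE_ROUTES):
    """Return the route whose prefix is the longest match for destination."""
    address = ipaddress.ip_address(destination)
    candidates = [
        route for route in routes
        if address in ipaddress.ip_network(route[0])
    ]
    # The most specific prefix (largest prefix length) wins.
    return max(candidates, key=lambda r: ipaddress.ip_network(r[0]).prefixlen)


# An AVS workload VM is reached through the avs-bgp-vm (10.100.203.68).
print(lookup("10.100.110.10"))
# A spoke VM matches the peering route, not the default route.
print(lookup("10.100.201.4"))
# Anything else falls back to the default route.
print(lookup("8.8.8.8"))
```

Because 0.0.0.0/0 matches every address, the lookup always finds at least one candidate in this table.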

UDR in AVS transit bgp-subnet

As we want to rely on hub-nva for spoke-to-spoke traffic and Internet breakout, we need to change the UDR applied to the bgp-subnet within avs-transit-vnet so that this traffic goes through hub-nva.

And the result on effective routes:

```bash
az network nic show-effective-route-table \
  --ids /subscriptions/<sub-id>/resourceGroups/nva-testing-RG/providers/Microsoft.Network/networkInterfaces/avs-bgp-nic \
  -o table
Source                 State    Address Prefix     Next Hop Type          Next Hop IP
---------------------  -------  -----------------  ---------------------  -------------
Default                Active   10.100.203.0/24    VnetLocal
Default                Active   10.100.200.0/24    VNetPeering
VirtualNetworkGateway  Active   10.100.100.64/26   VirtualNetworkGateway  10.24.132.60
VirtualNetworkGateway  Active   10.100.109.0/24    VirtualNetworkGateway  10.24.132.60
VirtualNetworkGateway  Active   10.100.101.0/25    VirtualNetworkGateway  10.24.132.60
VirtualNetworkGateway  Active   10.100.100.0/26    VirtualNetworkGateway  10.24.132.60
VirtualNetworkGateway  Active   10.100.110.0/24    VirtualNetworkGateway  10.24.132.60
VirtualNetworkGateway  Active   10.100.111.0/24    VirtualNetworkGateway  10.24.132.60
VirtualNetworkGateway  Active   10.100.113.0/24    VirtualNetworkGateway  10.24.132.60
VirtualNetworkGateway  Active   10.100.114.0/24    VirtualNetworkGateway  10.24.132.60
VirtualNetworkGateway  Active   10.100.100.192/32  VirtualNetworkGateway  10.24.132.60
VirtualNetworkGateway  Active   10.100.103.0/26    VirtualNetworkGateway  10.24.132.60
VirtualNetworkGateway  Active   10.100.101.128/25  VirtualNetworkGateway  10.24.132.60
VirtualNetworkGateway  Active   10.100.102.0/25    VirtualNetworkGateway  10.24.132.60
VirtualNetworkGateway  Invalid  0.0.0.0/0          VirtualNetworkGateway  10.100.203.68
User                   Active   0.0.0.0/0          VirtualAppliance       10.100.200.68  # <--- Default route via hub-nva
```

In the last line, we can see that the default route now goes through the hub-nva VM (10.100.200.68).
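The table also shows the BGP-learned 0.0.0.0/0 route as Invalid while the User one is Active. When several routes share the same prefix, Azure prefers user-defined routes over BGP routes, and BGP routes over system routes. The snippet below is a simplified Python sketch of that tie-break, applied to three rows from the table. The `mark_states` helper and the mapping of the Source column to a numeric rank are assumptions made for illustration, and any special cases in the real platform logic are ignored.

```python
# Lower rank means higher priority when prefixes are identical
# (assumed mapping from the "Source" column of the effective routes).
SOURCE_PRIORITY = {"User": 0, "VirtualNetworkGateway": 1, "Default": 2}


def mark_states(routes):
    """Tag each (source, prefix, next_hop) route as Active or Invalid."""
    best_rank = {}
    for source, prefix, _next_hop in routes:
        rank = SOURCE_PRIORITY[source]
        best_rank[prefix] = min(rank, best_rank.get(prefix, rank))
    return [
        (
            source,
            "Active" if SOURCE_PRIORITY[source] == best_rank[prefix] else "Invalid",
            prefix,
            next_hop,
        )
        for source, prefix, next_hop in routes
    ]


# Three rows taken from the avs-bgp-nic effective routes.
CANDIDATES = [
    ("VirtualNetworkGateway", "10.100.110.0/24", "10.24.132.60"),
    ("VirtualNetworkGateway", "0.0.0.0/0", "10.100.203.68"),
    ("User", "0.0.0.0/0", "10.100.200.68"),
]

for row in mark_states(CANDIDATES):
    print(*row)
```

With these inputs, the BGP default route is tagged Invalid and the UDR default route via 10.100.200.68 stays Active, which matches the states reported in the table.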

Tests (s7)

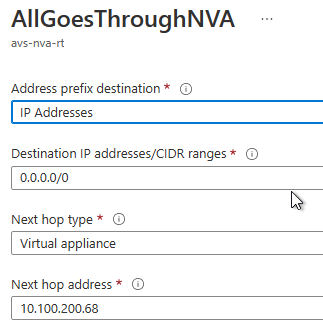

At this stage, the traffic from and to AVS will go through the hub-nva and the avs-bgp-vm. We can easily check this, either by snooping on each routing appliance or by looking at the result of a traceroute to a spoke VM:

```bash
ubuntu@avs-vm-100-10:~$ mtr 10.100.201.4 --report
HOST: avs-vm-100-10       Loss%   Snt   Last   Avg  Best  Wrst StDev
  1.|-- _gateway           0.0%    10    0.2   0.2   0.1   0.2   0.0
  2.|-- 100.64.176.0       0.0%    10    0.2   0.2   0.2   0.3   0.0
  3.|-- 100.72.18.17       0.0%    10    0.8   0.8   0.7   1.1   0.1
  4.|-- 10.100.100.237     0.0%    10    1.1   1.2   1.1   1.4   0.1
  5.|-- ???               100.0    10    0.0   0.0   0.0   0.0   0.0
  6.|-- 10.100.203.68      0.0%    10    3.1   3.1   2.7   5.6   0.9  # <--- avs-bgp-vm
  7.|-- 10.100.200.68      0.0%    10    4.5   4.8   3.4   7.6   1.6  # <--- hub-nva
  8.|-- 10.100.201.4       0.0%    10    4.1   6.6   4.1   9.3   1.8  # <--- spoke-vm
```

On hops 6 and 7, we can see the IP addresses of avs-bgp-vm (10.100.203.68) and hub-nva (10.100.200.68). We can reproduce the same behavior with Internet targets.
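When repeating this check against several targets, matching hops to lab components by eye gets tedious. As a small convenience, the following Python sketch labels the hops of an mtr report using the addresses known in this lab. The `KNOWN_HOPS` mapping and the `label_hops` helper are hypothetical names introduced here, and the regular expression assumes the `--report` layout shown above.

```python
import re

# Addresses of the lab components, as documented in this post.
KNOWN_HOPS = {
    "10.100.203.68": "avs-bgp-vm",
    "10.100.200.68": "hub-nva",
    "10.100.201.4": "spoke-vm",
}

# Matches lines such as "  6.|-- 10.100.203.68  0.0% ..."
HOP_LINE = re.compile(r"^\s*(\d+)\.\|--\s+(\S+)")

REPORT = """\
HOST: avs-vm-100-10 Loss% Snt Last Avg Best Wrst StDev
  1.|-- _gateway 0.0% 10 0.2 0.2 0.1 0.2 0.0
  2.|-- 100.64.176.0 0.0% 10 0.2 0.2 0.2 0.3 0.0
  3.|-- 100.72.18.17 0.0% 10 0.8 0.8 0.7 1.1 0.1
  4.|-- 10.100.100.237 0.0% 10 1.1 1.2 1.1 1.4 0.1
  5.|-- ??? 100.0 10 0.0 0.0 0.0 0.0 0.0
  6.|-- 10.100.203.68 0.0% 10 3.1 3.1 2.7 5.6 0.9
  7.|-- 10.100.200.68 0.0% 10 4.5 4.8 3.4 7.6 1.6
  8.|-- 10.100.201.4 0.0% 10 4.1 6.6 4.1 9.3 1.8
"""


def label_hops(report, known=KNOWN_HOPS):
    """Return (hop number, host, component name or '') for each hop line."""
    hops = []
    for line in report.splitlines():
        match = HOP_LINE.match(line)
        if match:
            host = match.group(2)
            hops.append((int(match.group(1)), host, known.get(host, "")))
    return hops


for number, host, name in label_hops(REPORT):
    print(number, host, name)
```

Hops that are not in the mapping, such as the AVS internal ones, are kept with an empty label rather than dropped, so the hop numbering stays intact.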

Unfortunately, neither avs-transit-vnet nor AVS resources are reachable from on-premises (VPN) resources, as no route is advertised for those targets.

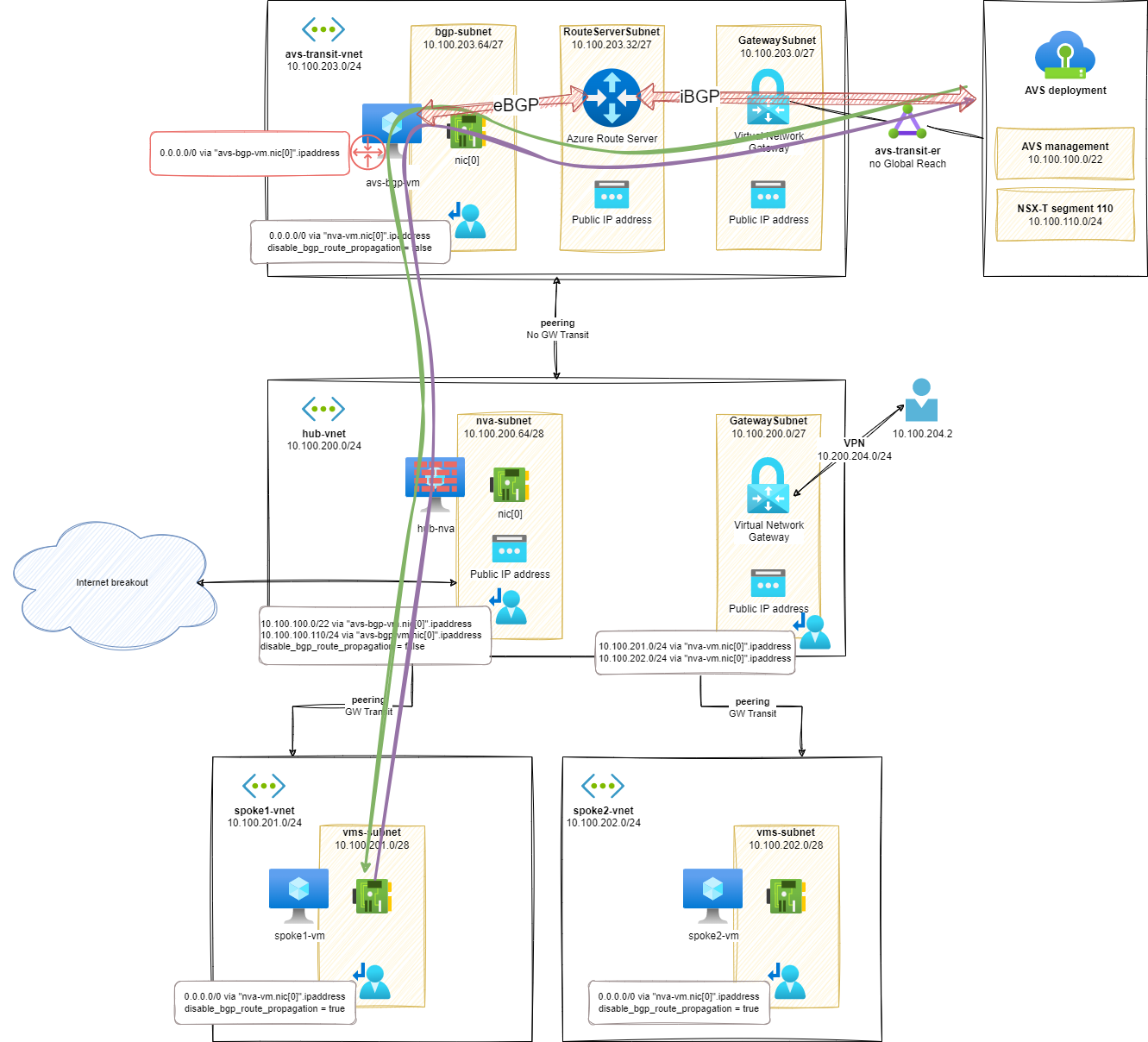

Stage 8 – AVS from on-Premises

As we discovered in the previous step, our on-premises resources do not have any route advertised to communicate with either avs-transit-vnet or AVS resources.

We will fix this in the current step by adding:

- New routes for avs-transit-vnet and AVS resources in the GatewaySubnet UDR.

  - To simplify the routes in my lab setup, I advertise the global prefix of my Azure resources (including AVS ones) in a single route: 10.100.0.0/16

  - This UDR will be used by the VPN gateway to find a network path to the resources.

- A custom route in the VPN configuration to specify to VPN clients that the network traffic for the target resources should be going through the VPN.

  - As for the UDR, I simplify the custom route announcement in my setup by using a global prefix for all the resources: 10.100.0.0/16
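Using a single global prefix only works if every lab prefix actually falls inside it. The check below is a quick Python sketch of that verification with the standard `ipaddress` module. The list of prefixes is taken from the route tables shown earlier in this post, and the `uncovered` helper is an illustrative name.

```python
import ipaddress

# Global prefix used for both the GatewaySubnet UDR and the VPN custom route.
SUPERNET = ipaddress.ip_network("10.100.0.0/16")

# Prefixes seen in the lab so far (vNets, AVS management, AVS workload).
LAB_PREFIXES = [
    "10.100.200.0/24",
    "10.100.201.0/24",
    "10.100.202.0/24",
    "10.100.203.0/24",
    "10.100.100.0/22",
    "10.100.110.0/24",
]


def uncovered(prefixes, supernet=SUPERNET):
    """Return the prefixes that are NOT contained in the supernet."""
    return [
        prefix for prefix in prefixes
        if not ipaddress.ip_network(prefix).subnet_of(supernet)
    ]


# An empty list means the single /16 route covers every lab prefix.
print(uncovered(LAB_PREFIXES))
# A prefix outside the lab range is reported as uncovered.
print(uncovered(["10.24.132.0/24"]))
```

If the lab later grows outside 10.100.0.0/16, this kind of check would flag the prefixes that need their own route.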

Tests (s8)

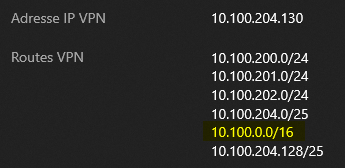

From the VPN client, it is easy to see the custom route (10.100.0.0/16) added to the VPN path:

And if we check the connectivity from a P2S VPN client with an AVS based VM:

```bash
ubuntu@vpn-client:~$ ping 10.100.110.10 -c3
# output
PING 10.100.110.10 (10.100.110.10) 56(84) bytes of data.
64 bytes from 10.100.110.10: icmp_seq=1 ttl=57 time=52.3 ms
64 bytes from 10.100.110.10: icmp_seq=2 ttl=57 time=30.1 ms
64 bytes from 10.100.110.10: icmp_seq=3 ttl=57 time=52.9 ms
--- 10.100.110.10 ping statistics ---
3 packets transmitted, 3 received, 0% packet loss, time 2003ms
rtt min/avg/max/mdev = 30.097/45.119/52.947/10.625 ms
```

Routes analysis (s8)

In this on-premises to AVS exchange, the following routing components are used:

- On the VPN client side, the custom route advertises a path for the resources matching the Azure global prefix and/or AVS resources

- The VPN gateway relies on its attached UDR to use the hub-nva as next hop

- The hub-nva relies on its UDR to find a path to AVS based resources using the avs-bgp-vm as a next hop

- The vNET peering enables the communication between resources from hub-vnet and avs-transit-vnet

- In the avs-transit-vnet, the path to AVS resources is directly advertised from the Express Route Gateway linked to AVS and propagating the AVS BGP routes.

In the opposite direction:

- The combination of avs-bgp-vm and Azure Route Server provides a default (0.0.0.0/0) route for the AVS based workload:

  - 6a) The default route is announced over BGP from the avs-transit-vnet

  - 6b) The Azure Route Server propagates the route advertisement in the Azure SDN and the route can be advertised to the AVS workload through the Express Route circuit

- The vNET peering enables the communication between resources from hub-vnet and avs-transit-vnet

- In the hub-vnet, the path to VPN based workload is directly advertised from the VPN Gateway.
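The on-premises to AVS direction described above can be sketched as a chain of per-component lookups. The Python snippet below is a deliberately simplified model, not a description of how Azure forwards packets internally: each component gets a tiny routing table built from the routes discussed in this post, and the labels `vpn-gateway`, `expressroute-gateway` and `avs` are placeholder names chosen for the illustration.

```python
import ipaddress

# Simplified per-component routing tables: (prefix, next component).
TABLES = {
    "vpn-gateway": [("10.100.0.0/16", "hub-nva")],           # GatewaySubnet UDR
    "hub-nva": [
        ("10.100.110.0/24", "avs-bgp-vm"),                   # nva-subnet UDR
        ("10.100.100.0/22", "avs-bgp-vm"),
    ],
    "avs-bgp-vm": [
        ("10.100.110.0/24", "expressroute-gateway"),         # BGP routes from AVS
        ("0.0.0.0/0", "hub-nva"),                            # bgp-subnet UDR
    ],
    "expressroute-gateway": [("10.100.110.0/24", "avs")],
}


def next_hop(node, destination):
    """Longest-prefix match in the table of one component, or None."""
    address = ipaddress.ip_address(destination)
    matches = [
        (ipaddress.ip_network(prefix), hop)
        for prefix, hop in TABLES.get(node, [])
        if address in ipaddress.ip_network(prefix)
    ]
    if not matches:
        return None
    return max(matches, key=lambda m: m[0].prefixlen)[1]


def trace(start, destination, max_hops=10):
    """Follow next hops from start until no route matches or max_hops is hit."""
    path = [start]
    while len(path) <= max_hops:
        hop = next_hop(path[-1], destination)
        if hop is None:
            break
        path.append(hop)
    return path


print(" -> ".join(trace("vpn-gateway", "10.100.110.10")))
```

The `max_hops` guard is there because a misconfigured pair of tables (for example two components pointing at each other) would otherwise loop forever in this model.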

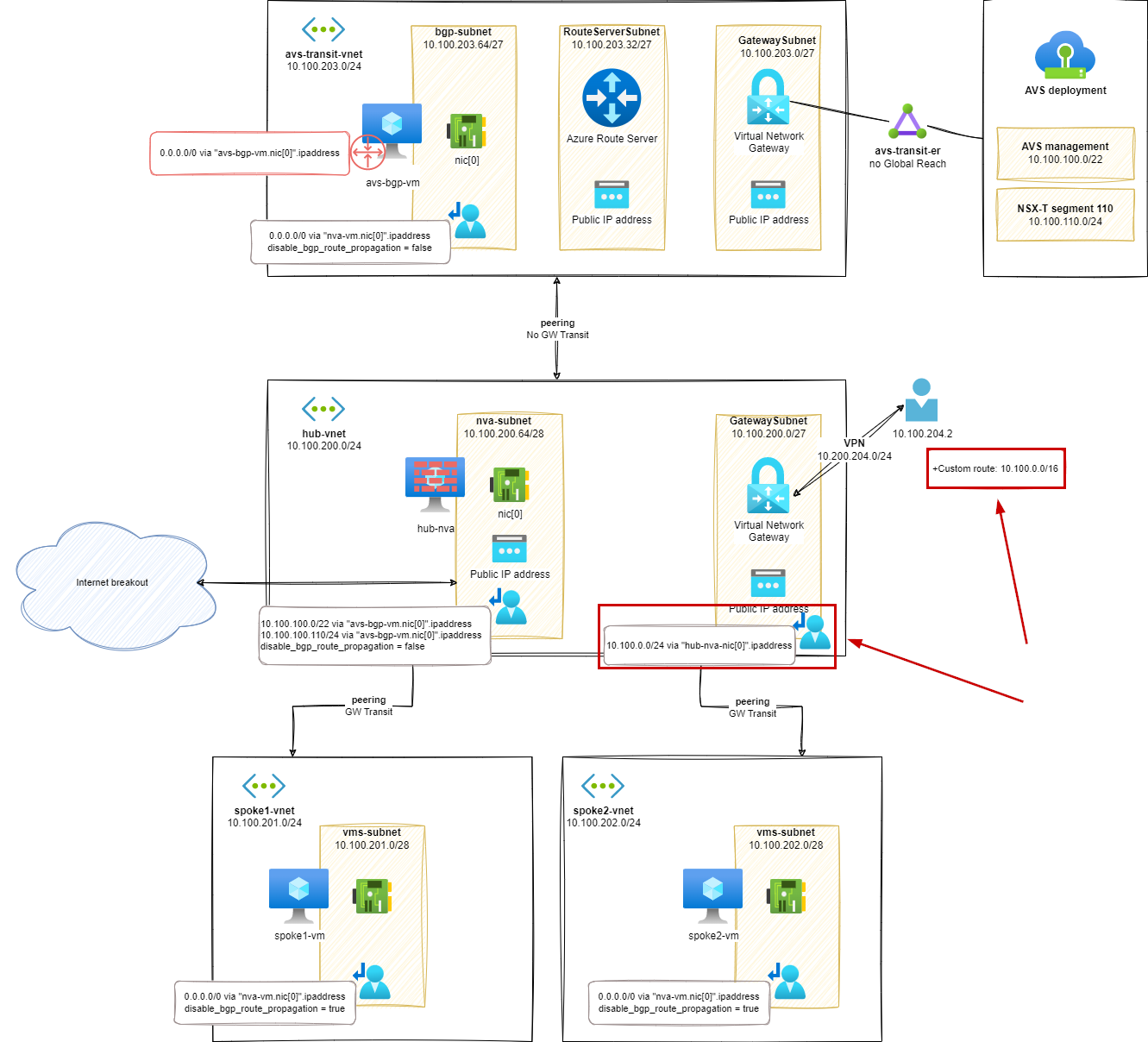

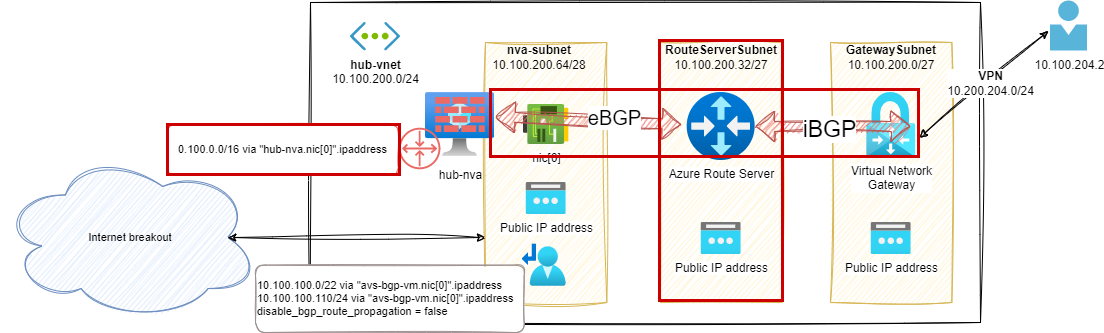

Stage 9 – Azure Route Server in the hub vNet

It is possible to use a combination of the hub-nva BGP capabilities and Azure Route Server to advertise network prefixes used in Azure to the VPN Virtual Network Gateway and avoid maintaining the Route Table of the GatewaySubnet.

Compared to the previous setup, we removed:

- Custom route in the VPN configuration

- UDR attached to the GatewaySubnet

And we added:

- A new Azure Route Server

- A BGP route advertised from hub-nva (I used the global Azure prefix of the lab, but this can be more specific announcements)

- A BGP peering between hub-nva and the Azure Route Server

Routes analysis (s9)

From Azure Route Server, we can see that the BGP peer is injecting the expected route:

```powershell
$routes = @{
    RouteServerName   = 'HubRouterServer'
    ResourceGroupName = 'nva-testing-RG'
    PeerName          = 'hub-rs-bgp-connection'
}
Get-AzRouteServerPeerLearnedRoute @routes | ft
# output
LocalAddress  Network       NextHop       SourcePeer    Origin AsPath Weight
------------  -------       -------       ----------    ------ ------ ------
10.100.200.36 10.100.0.0/16 10.100.200.68 10.100.200.68 EBgp   65001  32768
10.100.200.37 10.100.0.0/16 10.100.200.68 10.100.200.68 EBgp   65001  32768
```

From the VPN gateway, we can also see the advertised routes:

```bash
az network vnet-gateway list-learned-routes -n hub-vpn-gateway -g nva-testing-RG -o table
# output
Network            NextHop        Origin    SourcePeer     AsPath    Weight
-----------------  -------------  --------  -------------  --------  --------
10.100.200.0/24                   Network   10.100.200.5             32768
10.100.201.0/24                   Network   10.100.200.5             32768
10.100.202.0/24                   Network   10.100.200.5             32768
10.100.204.0/25                   Network   10.100.200.5             32768
10.100.204.128/25  10.100.200.4   IBgp      10.100.200.4             32768
10.100.204.128/25  10.100.200.4   IBgp      10.100.200.36            32768
10.100.204.128/25  10.100.200.4   IBgp      10.100.200.37            32768
10.100.0.0/16      10.100.200.68  IBgp      10.100.200.36  65001     32768  # <--- BGP route from hub-nva
10.100.0.0/16      10.100.200.68  IBgp      10.100.200.37  65001     32768  # <--- BGP route from hub-nva
10.100.200.0/24                   Network   10.100.200.4             32768
10.100.201.0/24                   Network   10.100.200.4             32768
10.100.202.0/24                   Network   10.100.200.4             32768
10.100.204.128/25                 Network   10.100.200.4             32768
10.100.204.0/25    10.100.200.5   IBgp      10.100.200.5             32768
10.100.204.0/25    10.100.200.5   IBgp      10.100.200.36            32768
10.100.204.0/25    10.100.200.5   IBgp      10.100.200.37            32768
10.100.0.0/16      10.100.200.68  IBgp      10.100.200.36  65001     32768  # <--- BGP route from hub-nva
10.100.0.0/16      10.100.200.68  IBgp      10.100.200.37  65001     32768  # <--- BGP route from hub-nva
```
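In a longer table, the routes coming from hub-nva can be isolated by looking at the AS path, since 65001 is the AS number that appears on its announcements. The Python sketch below filters a few rows copied from the output above. The dictionary keys simply mirror the column names and are an assumption of this example, not the exact field names returned by the CLI, and `routes_from_asn` is an illustrative helper.

```python
from collections import defaultdict

# A few rows copied from the learned-routes table; keys mirror its columns.
LEARNED_ROUTES = [
    {"network": "10.100.200.0/24", "next_hop": "", "origin": "Network",
     "source_peer": "10.100.200.5", "as_path": ""},
    {"network": "10.100.204.128/25", "next_hop": "10.100.200.4", "origin": "IBgp",
     "source_peer": "10.100.200.36", "as_path": ""},
    {"network": "10.100.0.0/16", "next_hop": "10.100.200.68", "origin": "IBgp",
     "source_peer": "10.100.200.36", "as_path": "65001"},
    {"network": "10.100.0.0/16", "next_hop": "10.100.200.68", "origin": "IBgp",
     "source_peer": "10.100.200.37", "as_path": "65001"},
]


def routes_from_asn(routes, asn):
    """Map each network originated by asn to the sorted peers that relayed it."""
    peers_by_network = defaultdict(set)
    for route in routes:
        as_path = route["as_path"].split()
        # The originating AS is the last entry of the AS path.
        if as_path and as_path[-1] == asn:
            peers_by_network[route["network"]].add(route["source_peer"])
    return {network: sorted(peers) for network, peers in peers_by_network.items()}


print(routes_from_asn(LEARNED_ROUTES, "65001"))
```

Here the two source peers are the Azure Route Server instances (10.100.200.36 and 10.100.200.37) that relay the hub-nva announcement to the VPN gateway.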

And from the VPN client, the route is also available among the ones from vNET peerings:

Tests (s9)

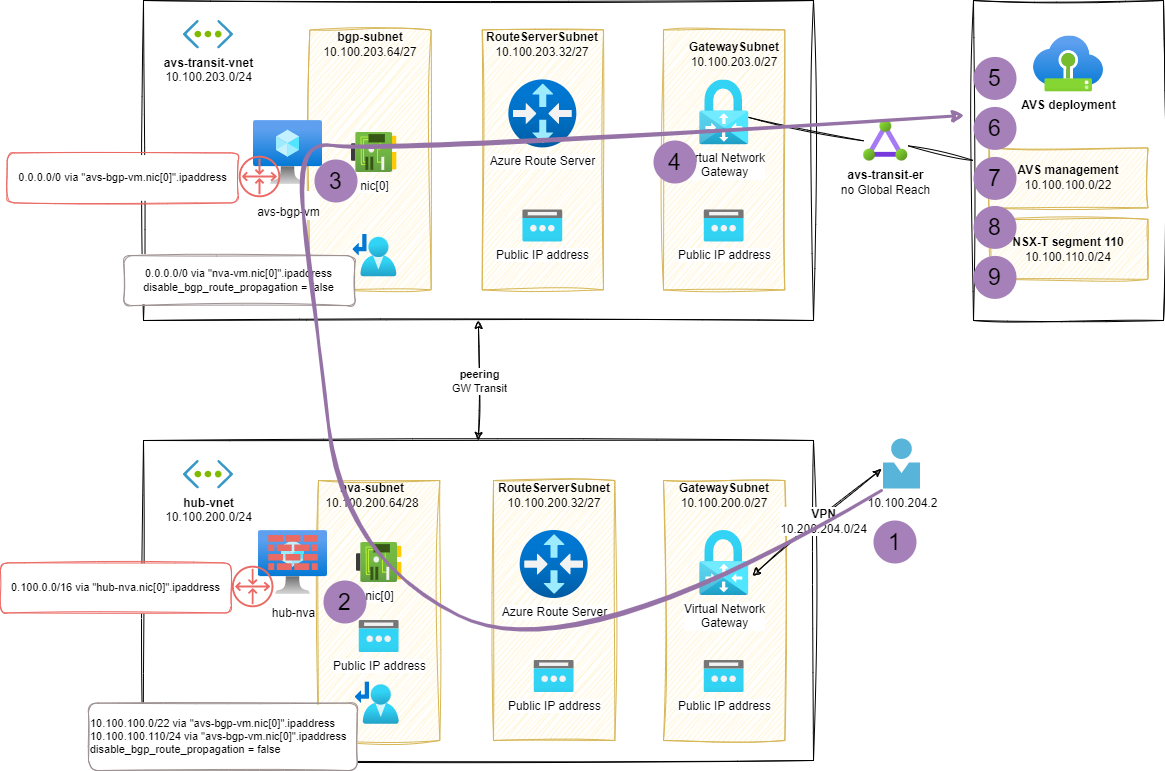

When reaching an AVS based VM from a VPN client we can see the components of our topology:

```bash
ubuntu@vpn-client:~$ mtr 10.100.110.10 -r
# output
Start: 2023-02-09T17:21:35+0100
HOST: vpn-client          Loss%   Snt   Last   Avg  Best  Wrst StDev
  1.|-- vpn-client         0.0%    10    0.5   0.5   0.3   1.0   0.3
  2.|-- 10.100.200.68      0.0%    10   21.0  53.3  20.6 307.4  89.7
  3.|-- 10.100.203.68      0.0%    10   25.4  44.5  21.6 207.4  57.8
  4.|-- 10.100.203.4       0.0%    10   36.0  42.4  21.9 120.7  29.9
  5.|-- 10.100.100.233     0.0%    10   54.0  32.0  23.8  59.9  13.5
  6.|-- 10.100.100.65      0.0%    10   49.3  39.8  24.6  55.8  12.5
  7.|-- ???               100.0    10    0.0   0.0   0.0   0.0   0.0
  8.|-- ???               100.0    10    0.0   0.0   0.0   0.0   0.0
  9.|-- 10.100.110.10      0.0%    10   60.5  37.4  22.7  64.9  18.2
```

I matched the hop numbers of mtr trace in the following diagram (considering a set of hops are part of the AVS network stack and not documented here):

Additional information about AVS connectivity with Azure Route Server

If you want to learn more about Azure Route Server, I strongly recommend reading the following posts:

- Azure Route Server: to encap or not to encap, that is the question and Azure Firewall’s sidekick to join the BGP superheroes by Jose Moreno

- NVA Routing 2.0 with Azure Route Server, VxLAN (or IPSec) & BGP by Cynthia Treger

- Azure Route Server in Azure documentation

Part 3 – Conclusion

In the three posts of this series, we covered a lot of topics related to Azure networking in the context of adopting a Hub and Spoke topology with an Azure VMware Solution deployment.

We created this mockup setup step-by-step to demonstrate the capabilities of some Azure products (like Azure Route Server) and features (like route propagation in UDRs).

In the last step, we have a working setup with a central hub-nva VM able to route and filter traffic from/to the AVS environment, spoke vNets, and the on-premises resources.

Note: the setup described in this post is not meant to be used in production due to the lack of high availability and redundancy of the components. It is just a mockup to demonstrate the capabilities of Azure networking products and features.

I hope you enjoyed this series and learned something new about Azure networking. There will probably be more posts to extend this series in the future with new topics and use cases like:

- High availability and redundancy of the components

- Dynamic routing with BGP between the hub-nva and the avs-spoke-nva

See you in the next posts!